By Rob Sacks, Editor at LinkedIn News — Your face or fingerprint could be your ticket to clearing airport security in 2024. Passports are being replaced by biometrics, physical characteristics unique to every person, that will help an increasing number of passengers get to their planes more quickly. The program, which the Transportation Security Administration […]

by Microsoft Research — The Global Health Drug Discovery Institute (GHDDI) and Microsoft Research recently achieved significant progress in accelerating drug discovery for the treatment of global infectious diseases. Working in close collaboration, the joint team successfully used generative AI and foundation models to design several small molecule inhibitors for essential target proteins of Mycobacterium tuberculosis and coronaviruses. These new inhibitors show outstanding bioactivities, comparable to or surpassing the best-known lead compounds.

This breakthrough is a testament to the team’s combined efforts in generative AI, molecular physicochemical modeling, and iterative feedback loops between scientists and AI technologies. Normally, the discovery and in vitro confirmation of such molecules could take up to several years, but with the acceleration of AI, the joint team achieved these new results in just five months. This research also shows the tremendous potential of AI for helping scientists discover or create the building blocks needed to develop effective treatments for infectious diseases that continue to threaten the health and lives of people around the world. Since 2019, for example, there have been more than 772 million confirmed cases of COVID-19 worldwide and nearly 7 million deaths from the virus, according to the World Health Organization (WHO), the Centers for Disease Control, and various other sources. Although vaccines have reduced the incidence and deadliness of the disease, the coronavirus continues to mutate and evolve, making it a serious ongoing threat to global health. Meanwhile, the WHO reports that tuberculosis continues to be a leading cause of death among infectious diseases, second only to COVID-19 in 2022, when 10.6 million people worldwide fell ill with TB and the disease killed 1.3 million (the most recent figures currently available).

By Darrell Etherington@etherington — techcrunch — Elon Musk’s Optimus humanoid robot from Tesla is doing more stuff — this time folding a t-shirt on a table in a development facility. The robot looks to be fairly competent when it comes to this task, but moments after Musk shared the video, he also shared some follow-up information which definitely dampens some of the enthusiasm for the robot’s domestic feat. First, I can definitely fold shirts faster than that. Second, Optimus wasn’t acting autonomously, which is obviously the end goal. Instead, the robot is here acting like a very expensive marionette, or at best a modern facsimile of the first rudimentary automatons, going through prescribed motions to accomplish its task. Musk said that eventually, it will “certainly be able to do this fully autonomously,” however, and without the highly artificial constraints in place for this demo, including the fixed height table and single article of clothing in the carefully placed basket.

Tesla has shown off a fair bit of technical wizardry with recent highlight reels released by the company, but the likely scenario is that all of these are highly scripted and pre-programmed activities that do more to show off the impressive functionality of the bot’s joints, servos and limbs than its artificial intelligence. Elon’s caveat, when considered for even a second, actually amounts to “all the very hard things will happen later.” Not to knock the difficulty in creating a humanoid machine that can manipulate soft materials like clothing in a manner approximating human interaction with said objects; that’s some mighty fine animatronics work. But suggesting that this puts them anywhere near the realm where Optimus will be operating as a fully-functional domestic servant with all the capabilities of a human domestic worker it might replace would be like showing a video of a wooden marionette and adding ‘of course, this will be a real boy soon.’

MIT Technology Review by Abdullahi Tsanni — One-third of US adults have obesity, a condition that makes them more susceptible to heart disease, diabetes, and cancer. Anti-obesity drugs—including Wegovy and Mounjaro—could help address this public health crisis. Success stories are everywhere online, from Reddit to TikTok. Novo Nordisk, the company behind two of the popular […]

MIT Technology Review by Cassandra Willyard — Therapies to treat brain diseases share a common problem: they struggle to reach their target. The blood vessels that permeate the brain have a special lining so tightly packed with cells that only very tiny molecules can pass through. This blood-brain barrier “acts as a seal,” protecting the brain from toxins or other harmful substances, says Anne Eichmann, a molecular biologist at Yale. But it also keeps most medicines out. Researchers have been working on methods to sneak drugs past the blood-brain barrier for decades. And their hard work is finally beginning to pay off. Last week, researchers at the West Virginia University Rockefeller Neuroscience Institute reported that by using focused ultrasound to open the blood-brain barrier, they improved delivery of a new Alzheimer’s treatment and sped up clearance of the sticky plaques that are thought to contribute to some of the cognitive and memory problems in people with Alzheimer’s by 32%. For this issue of The Checkup, we’ll explore some of the ways scientists are trying to disrupt the blood-brain barrier.

In the West Virginia study, three people with mild Alzheimer’s received monthly doses of aducanumab, a lab-made antibody that is delivered via IV. This drug, first approved in 2021, helps clear away beta-amyloid, a protein fragment that clumps up in the brains of people with Alzheimer’s disease. (The drug’s approval was controversial, and it’s still not clear whether it actually slows progression of the disease.) After the infusion, the researchers treated specific regions of the patients’ brains with focused ultrasound, but just on one side. That allowed them to use the other half of the brain as a control. PET scans revealed a greater reduction in amyloid plaques in the ultrasound-treated regions than in those same regions on the untreated side of the brain, suggesting that more of the antibody was getting into the brain on the treated side.

by Sean O’Kane — techcrunch — Hertz is selling off a third of its electric vehicle fleet, which is predominantly made up of Teslas, and will buy gas cars with some of the money it makes from the sales. The company cited lower demand for EVs and higher-than-expected repair costs as reasons for the decision. […]

by Brandon Vigliarolo – theregister — A mere 700 IT jobs were added in the US last year compared to 267,000 the year prior, it’s claimed. It’d be easy to blame layoffs, but that’s not all there is to it, says tech consultancy Janco Associates. Based on analysis of US Bureau of Labor Statistics data by Janco, news that the IT industry added just 700 jobs following an estimated 262,242 jobs lost amid mass layoffs is shocking, but not surprising. Yet while layoffs have generally kept IT job growth flat for the past year (2023’s net 700 comes despite more than 21,000 IT jobs being created in Q4), there’s still a surplus of vacant roles, with Janco finding some 88,000 remain open. “Based on our analysis, the IT job market and opportunities for IT professionals are poor at best,” said Janco CEO M Victor Janulaitis. “Currently, there are almost 100K unfilled jobs with over 101K unemployed IT Pros – a skills mismatch.”

In other words, while we’re definitely dealing with a correction from pandemic overhiring, we’re also wading into a new paradigm where a lot of tech talent is going to have to retrain because AI is being crammed wherever C-level employees can stick it.

A one-two punch to the IT jobs market

Much of the layoff debt to hit IT jobs has come to entry-level positions, especially those in the customer service, telecommunications, and hosting automation areas. In turn, some of the responsibilities of those jobs are being reassigned to the latest and greatest AIs, says Janco.

According to the tech consultancy, entry-level IT demand is shrinking, though those with AI, security, development, and blockchain skills remain in demand. “Artificial Intelligence and Machine Learning IT Professionals remain in high demand,” said Janulaitis. Still, plans to further replace humans with AI workers at the entry level are hardly far-fetched, with multiple reports finding much the same.

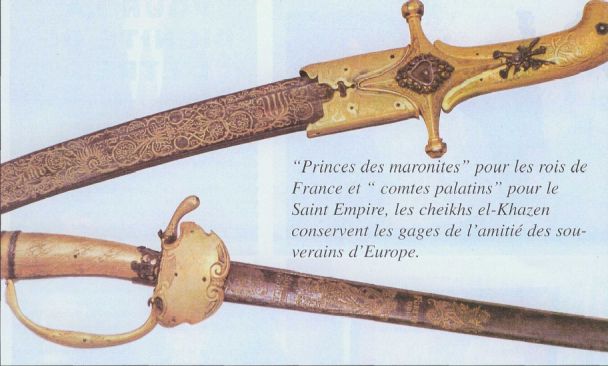

By ASSOCIATED PRESS – The information display screens at Beirut’s international airport were hacked by domestic anti-Hezbollah groups Sunday, as clashes between the Lebanese militant group and the Israeli military continue to intensify along the border. Departure and arrival information was replaced by a message accusing the Hezbollah group of putting Lebanon at risk of an all-out war with Israel. The screens displayed a message with logos from a hardline Christian group dubbed Soldiers of God, which has garnered attention over the past year for its campaigns against the LGBTQ+ community in Lebanon, and a little-known group that calls itself The One Who Spoke. In a video statement, the Christian group denied its involvement, while the other group shared photos of the screens on its social media channels. “Hassan Nasrallah, you will no longer have supporters if you curse Lebanon with a war for which you will bear responsibility and consequences,” the message read, echoing critics who over the years have accused Hezbollah of smuggling weapons and munitions through the tiny Mediterranean country’s only civilian airport. Hezbollah has been striking Israeli military bases and positions near the country’s northern border with Lebanon since Oct. 8, the day after the Hamas-Israel war in Gaza began. Israel has been striking Hezbollah positions in return.

The near-daily clashes have intensified sharply over the past week, after an apparent Israeli strike in a southern Beirut suburb killed top Hamas official and commander Saleh Arouri. In a speech on Saturday, Hezbollah leader Sayyed Hassan Nasrallah vowed that the group would retaliate. He dismissed criticisms that the group is looking for a full-scale war with Israel, but said if Israel launches one, Hezbollah is ready for a war “without limits.” Hezbollah announced an “initial response” to Arouri’s killing on Saturday, launching a volley of 62 rockets toward an Israeli air surveillance base on Mount Meron. The Lebanese government and international community have been scrambling to prevent a war in Lebanon, which they fear would spark a regional spillover. Lebanon’s state-run National News Agency said the hack briefly disrupted baggage inspection. Passengers gathered around the screens, taking pictures and sharing them on social media.

by Sharon Goldman — @sharongoldman – venturebeat — Disinformation concerns may be growing over the use of AI in the 2024 U.S. elections, but that isn’t stopping AI voice cloning startups from getting into the political game. For example, the Boca Raton, Florida-based Instreamatic, an AI audio/video ad platform that raised a $6.1 million Series […]

By Douglas Heaven — MIT Technology Review — This time last year we did something reckless. In an industry where nothing stands still, we had a go at predicting the future. How did we do? Our four big bets for 2023 were that the next big thing in chatbots would be multimodal (check: the most powerful large language models out there, OpenAI’s GPT-4 and Google DeepMind’s Gemini, work with text, images and audio); that policymakers would draw up tough new regulations (check: Biden’s executive order came out in October and the European Union’s AI Act was finally agreed in December); that Big Tech would feel pressure from open-source startups (half right: the open-source boom continues, but AI companies like OpenAI and Google DeepMind still stole the limelight); and that AI would change big pharma for good (too soon to tell: the AI revolution in drug discovery is still in full swing, but the first drugs developed using AI are still some years from market).

Now we’re doing it again.

We decided to ignore the obvious. We know that large language models will continue to dominate. Regulators will grow bolder. AI’s problems—from bias to copyright to doomerism—will shape the agenda for researchers, regulators, and the public, not just in 2024 but for years to come. (Read more about our six big questions for generative AI here.) Instead, we’ve picked a few more specific trends. Here’s what to watch out for in 2024. (Come back next year and check how we did.)

Customized chatbots